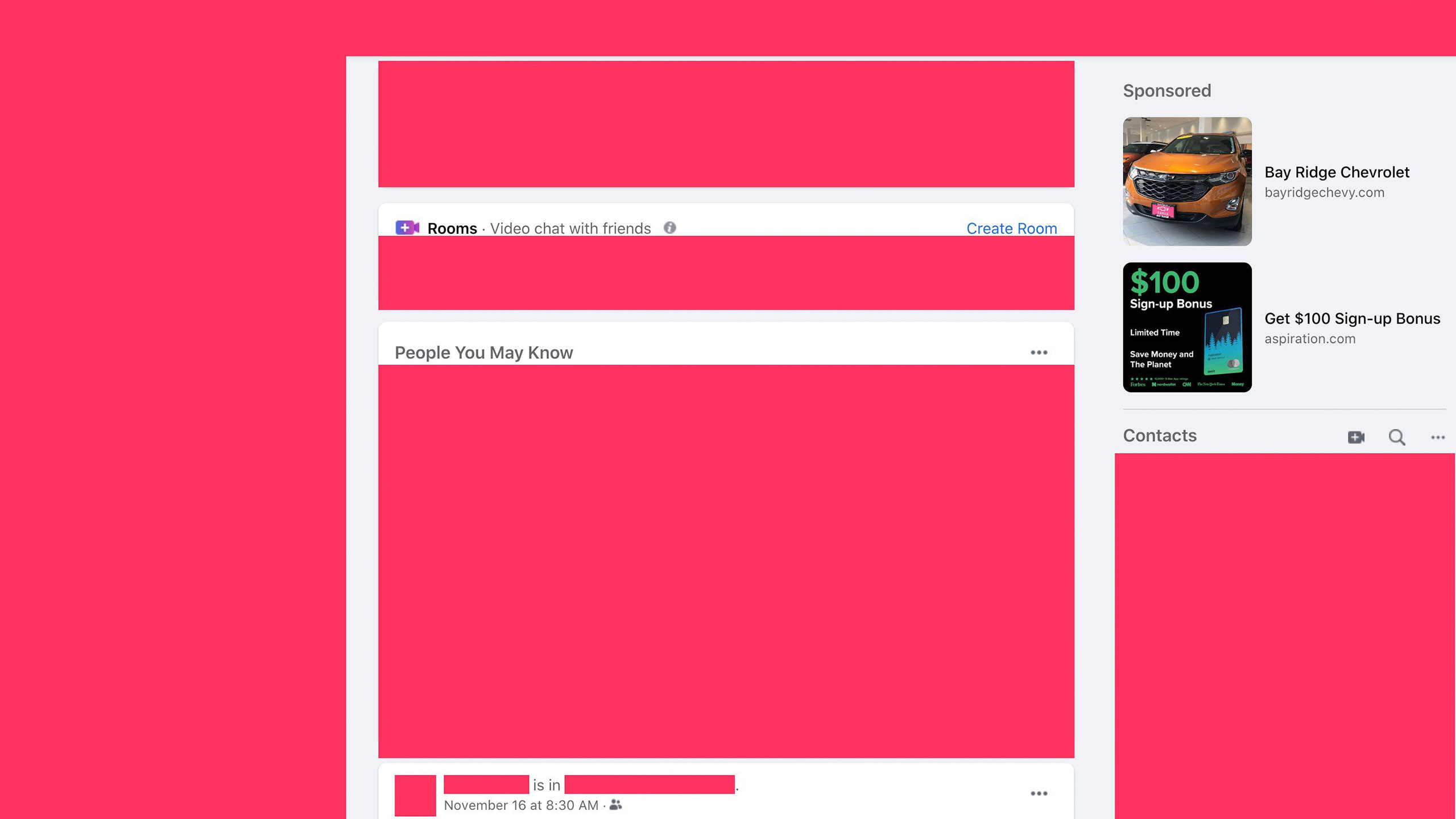

Like traditional broadcasters, social media platforms use their algorithms to choose which stories to amplify and which to suppress. But unlike traditional broadcasters, social media companies answer to no standards other than their own rules about what kinds of speech they will allow on their platforms. Their algorithms are also opaque. Unlike with the evening news, no one can see what they have decided is the top story of the day, and no two people see exactly the same content in their personalized feeds.

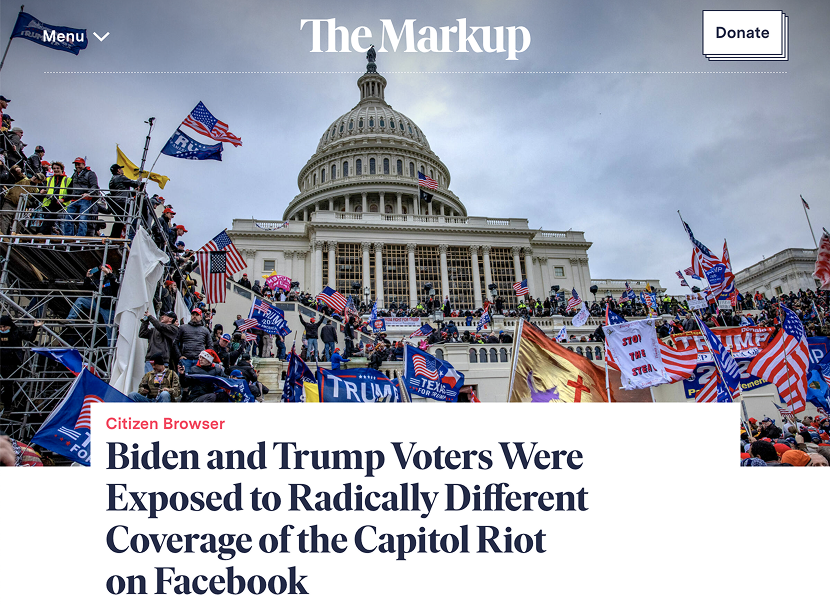

This raises important questions, given that one in five Americans say they get their political news primarily through social media. Online content can sway elections, influence public health, and sometimes even incite political violence.

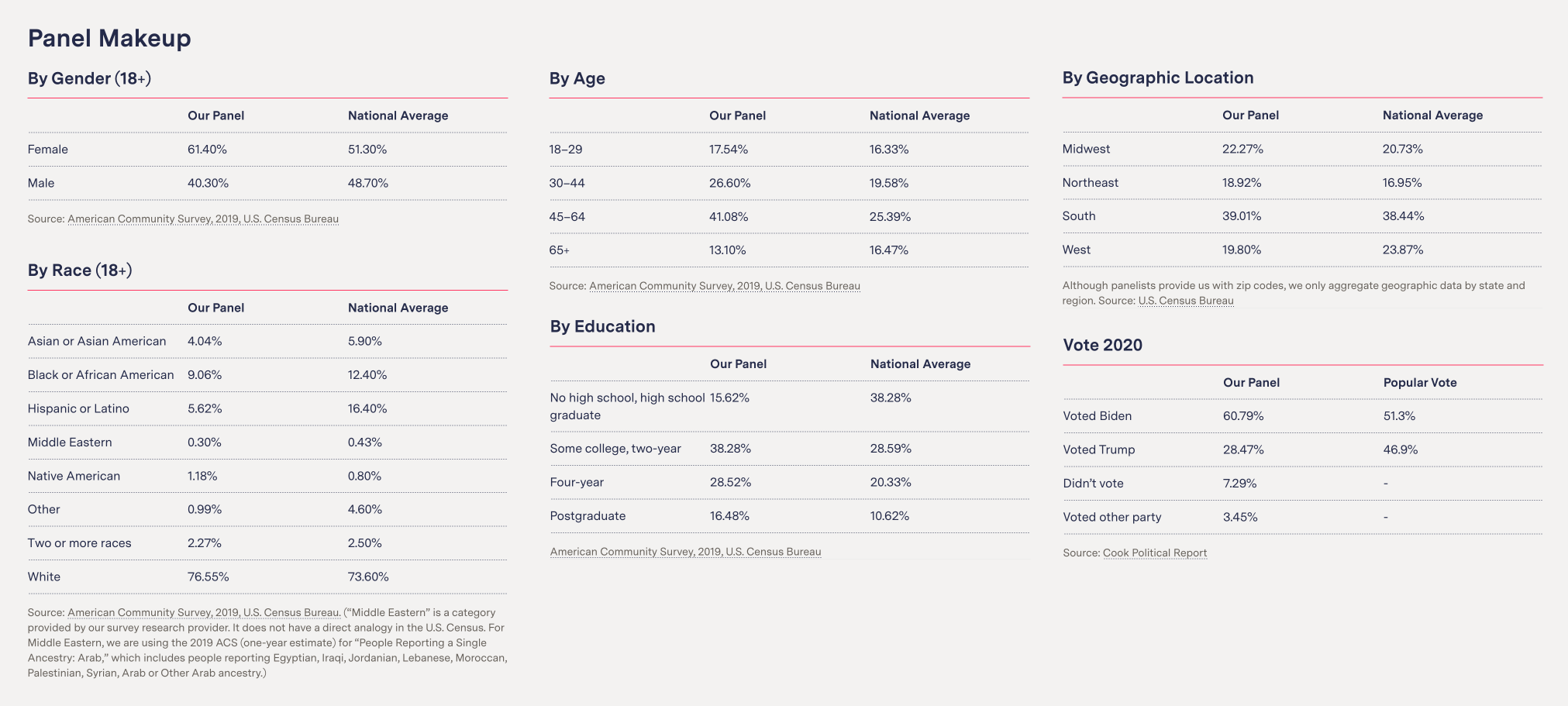

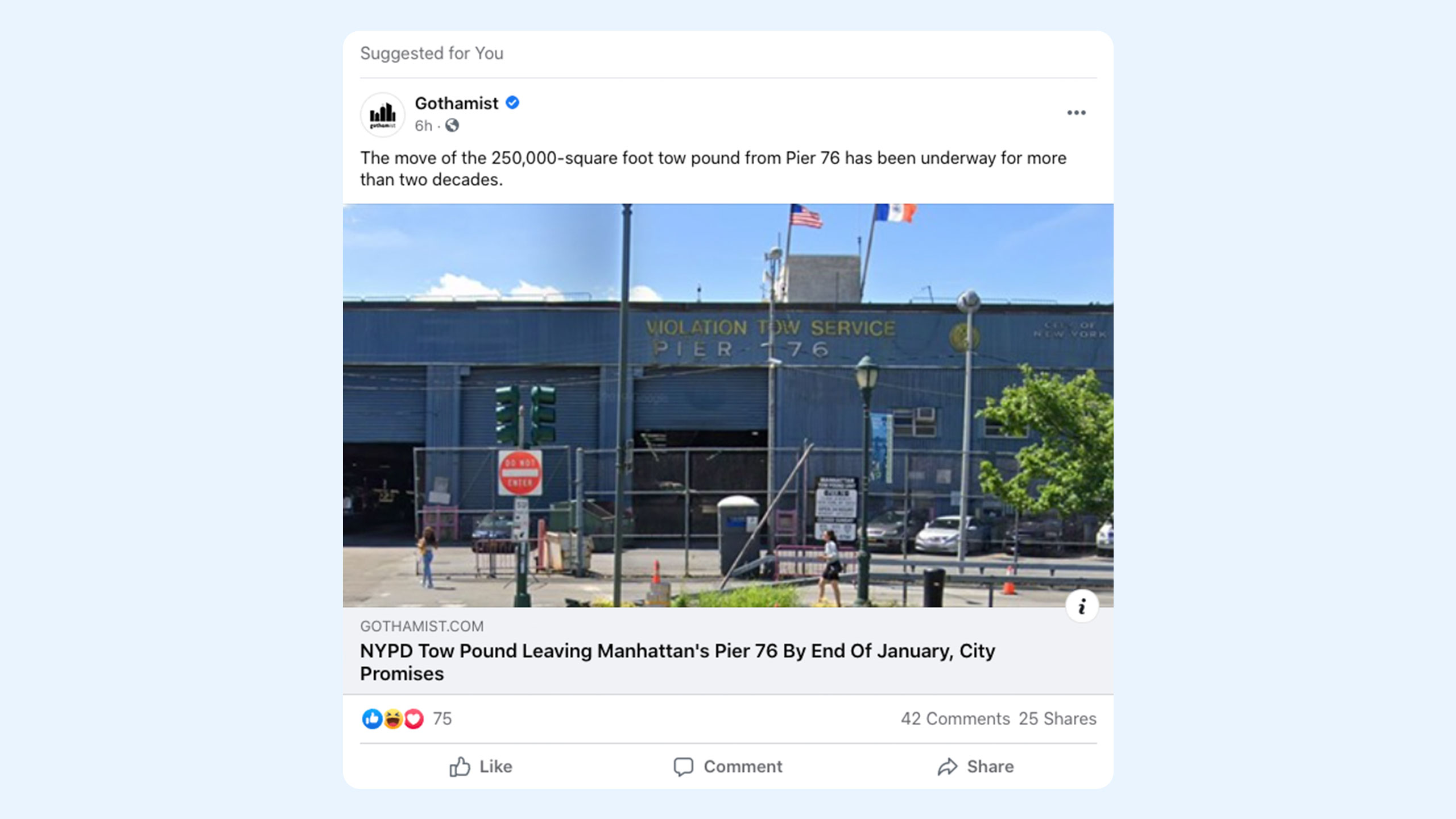

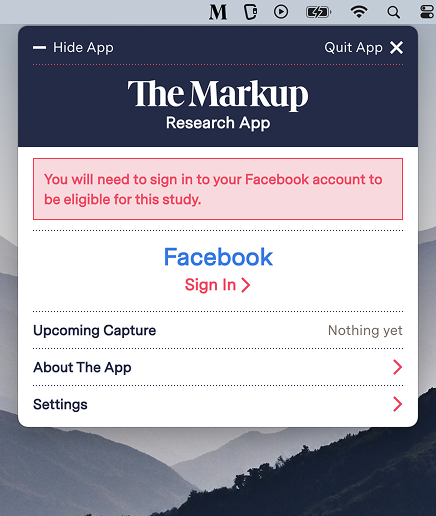

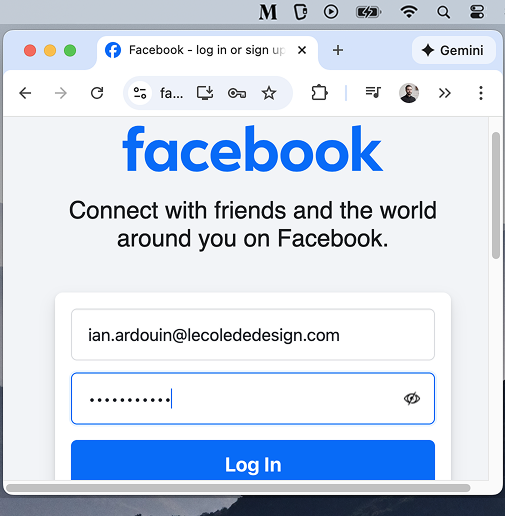

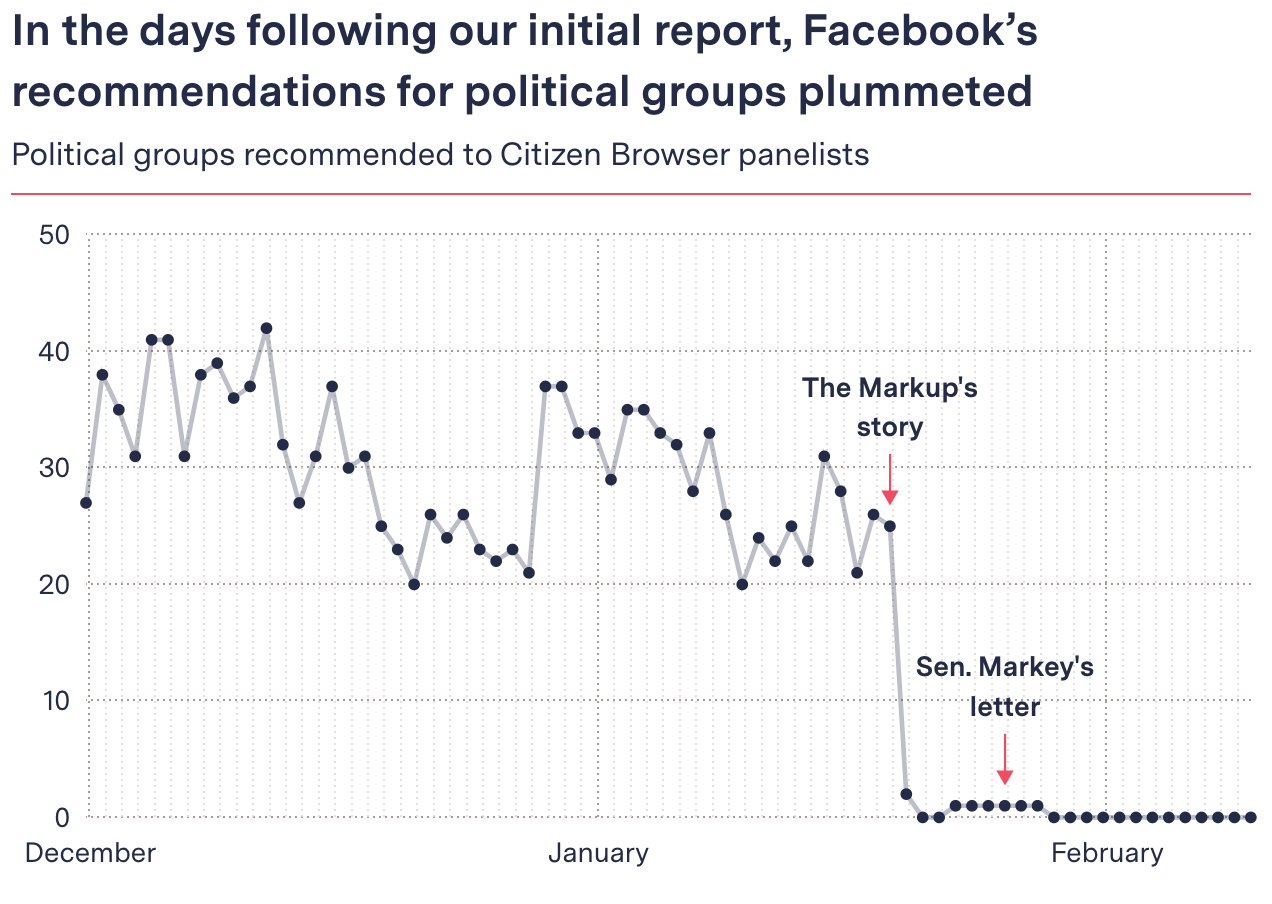

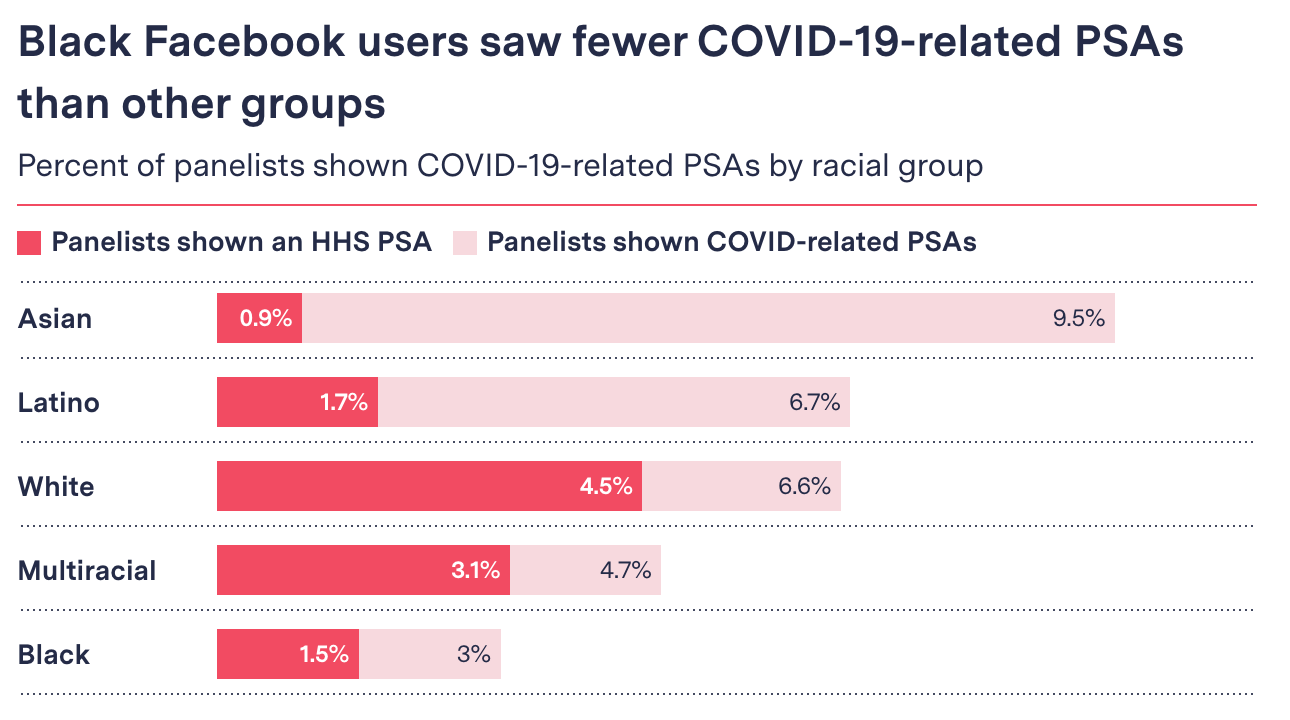

Against this backdrop, the nonprofit publication The Markup set out to shed light on the news and political content that Facebook's algorithms push to the American public. The project, called Citizen Browser, assembled a panel of 1,000 paid participants who recorded the content appearing in their Facebook feeds. Combined with the participants' demographic information, the resulting data enabled the first investigation of its kind into the political biases of social media algorithms.

Under the supervision of data journalist Surya Mattu and in collaboration with designer Sam Morris, I was commissioned to build the frontend application for Citizen Browser.